Introduction

Financial modeling is the process of creating a numeric representation of a financial situation- mostly, cash flow projection of a company or a project. Financial models are mostly used as a part of an assessment of financial projects, credit analysis and company valuation. Financial modeling is usually executed by using Microsoft Excel- a software which offers a lot of flexibility and advanced functions. Models range from very simple to extremely complex. A very simple model, such as the Gordon Growth Model, requires only 3 inputs in its basic version and can be constructed in less than 5 minutes.

Read Time: ~10 minutes

Content:

Financial models complexity

Modeling duration

Modeling in complexity layers

Consistency and mistakes check

Summary

Financial models complexity

The characteristics of the financial model you are creating should stem from its purpose and limitations. The most common uses, or the purposes to build financial models, are:

- FP&A, Financial Planning and Analysis: models used by economic departments in companies and institutions, mostly required to forecast budgets for various projects or operations.

- Valuation: models used by analysts to value companies and projects in order to provide investment recommendations.

- Credit Analysis: models used in financial institutions such as banks and investment firms to evaluate the downside risks of a company or project, used in the landing or investment in debt processes.

Those common uses of financial models have different limitations, which affect the potential complexity of the model:

| Limitation | FP&A | Valuation and Credit Analysis |

|---|---|---|

| Quantity of data | Usually a lot of very granular data collected by the company, depending on the data collection ability of the company. | Private company analysis: depends on the amount of data provided by the company analyzed, mostly not very granular data. Public company analysis: mostly public reports and industry analysis reports and data. |

| Quality of data | Mostly high quality historical data and relatively good micro-level projections, stemming from the experience of the company in its field. | High quality (audited) but limited historical data, micro-level projections vary by the experience and knowledge of the analyst and the data provided by the company analyzed. |

| Time | Usually enough time to create and maintain very complex models. | Analysts usually cover many companies, not enough time to create very complex models. |

We can see that FP&A models have less limitation compared to company valuation and credit analysis models, and so they tend to be more complex. The one thing to remember here is that the complexity of the model does not imply on its value to the user. This argument stems from two desirable but usually contradicting characteristics of a financial model:

- The model should represent the situation as accurately as possible.

- The model should be easy to understand, update inputs and make changes.

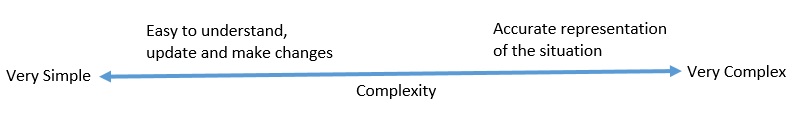

In a case without limitations (no data and time constraints), the complexity of the model can be described by the following axis:

We can also add limitations to this axis, as time constraints and lack of data will decrease possible complexity. As we said, the possible complexity will not always be the recommended complexity. The quantity of people who will use the model, the time to react to changes and the ability to maintain the model will affect its complexity. A very complex Project Finance model is useless if an external company, which may not always be available, is needed to analyze small changes in assumptions in a small project. Also, the situation in which only the creator of the model understands it, is not optimal to the organization.

The trust of the analyst and the decision making organs in the outputs of the model is also of vital importance. The chance of mistakes increases as the complexity increases. I will talk about methods to check for and avoid mistakes in one of the following paragraphs, but mistakes are always possible and will happen. An analyst will usually trust a model he created more than a model provided by a third party, even (and maybe due to) if the other model is much more complex. This is one of the reasons that Prof. Damodaran from the NYU Stern School of Business argues in his classes that analysts should be engaged and take ownership of their models. On the other hand, a very simple model, which does not include the major parameters which affect the performance of the project, will also not be trusted by the decision makers. This sums up the conflict of ease of use vs accuracy.

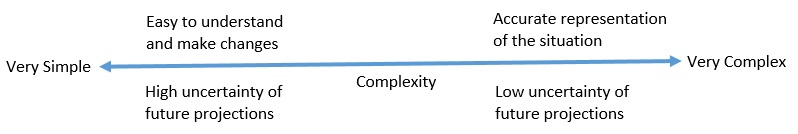

Another important parameter which affects the decision regarding the complexity of the model is the amount of uncertainty. Often, small changes to the inputs of a model can have a large effect on the results. If we are very uncertain regarding our assumptions of the future, increasing the number of inputs will not solve the uncertainty problem but only increase it. Prof. Damodaran referred to this issue in a blog entry: “When faced with more uncertainty, strip the model down to only the basic inputs, minimize the complexity and build the simplest model you can. Take out all but the key variables and reduce detail. I use this principle when valuing companies. The more uncertainty I face, the less detail I have in my valuation, recognizing that my capacity to forecast diminishes with uncertainty and that errors I make on these inputs will magnify as they percolate through the valuation”. In another paper dealing with valuation of young companies, he also suggests to use a relatively simple model to value startup companies- companies with no sales and a lot of uncertainty. This is the reason that you see very complex Project Finance models for infrastructure projects such as power plants, but very simple valuation models based on user multiples for startup internet companies. The saying “garbage in- garbage out” relates to this situation. Even if you build the most accurate model which incorporates all the possible inputs and assumptions, the output will be as uncertain as the input. We can add uncertainty to our “model complexity axis”:

Modeling Duration

The duration of the model also affects its complexity. The decision parameters regarding the duration of the model are:

- Purpose of the model.

- Characteristics of the situation modeled.

For FP&A models of projects, the duration will probably be the lifetime of the project. For credit analysis, the duration should be at least until the maturity of the loan/debenture, and often longer, if you assume the debt would be replaced by a new one. For valuation purposes, you may forecast cash flows during the planned holding period (for example, for real estate investment). Equity valuation is mostly forecasted for 3 to 10 years as the terminal value is calculated by using assumptions regarding a representative year. The decision regarding the forecasted period in equity valuation usually stems from the analyzed company’s stage in the company life cycle- models of mature companies will use less years compared to growth companies where the “steady state” year is distant.

Terminal value calculation, a part of an equity valuation, can be very material to the outcome of the model. As we determined, an equity valuation usually includes a projection of several years. The rest of the cash flows are valued by using the terminal value formula- the last projection year cash flow divided by the cost of capital minus the growth rate. Because of that, the terminal value often composes most of the total value of the equity. The growth rate and the cost of capital often draw the most of attention, but the cash flow assumption is as important- it should express the representative cash flow of the company in the long term.

Modeling in complexity layers

Building a financial model can be performed by using “complexity layers”- this method is very efficient when a time constraint is present, or when not all the data required is available. The logic is to create a very simple model, and then expand and add complexity to its various parts. Let’s think of an example using an apparel retail company. Our first and very basic model will include the items in the financial reports- revenues, cost of goods sold, selling and G&A expenses. The next step would be to expand on those items- for example, the revenues would be modeled by incorporating assumptions regarding store openings and closings and average revenue per store. The next layer of complexity would probably replace revenues per store by revenue per square feet, and existing and new stores would have assumptions regarding their average size, and so on and so on. In this method, you will have a model output very early, and as you add details, accuracy should improve. You should probably stop adding complexity then:

- You run out of relevant data. In our example, where is no sense to model average store size if that data is not available to you.

- You can’t make assumptions regarding the added layer. In our example, if you can’t make an assumption regarding the size of new stores and will assume it will be the same as old stores, then were is no need to add this to the model. This reason aligns with the topic of uncertainty- no need to add additional inputs if you cannot make reliable and smart assumptions for those inputs. The exception to adding those inputs is if they are very material to the outcome, and you want to analyze the sensitivity of the output to changes in those inputs.

- The model is becoming too complex and updates would require too much time. As we said in the beginning, complex models are hard to understand and maintain. If you are an equity analyst who should issue an update in the same day the company publishes its quarterly earnings, a hundred-input model which takes two days to update will not be very useful, no matter how theoretically accurate it is.

One of the ways to track complexity is to track the quantity of inputs. A good modeling system is to create a spreadsheet which contains all the inputs of the model, and add a reference to the input location near the calculation that use it in the various spreadsheets. It makes the model easier to update, and you can also see the assumptions used near the actual projection that uses it. By using this system you will eliminate duplicate inputs of the same variable and reduce the chance of mistakes and inconsistencies. Also, it will be very easy to count the quantity of inputs. If the model should be updated frequently and quickly, and you get to a hundred of different inputs, you should probably reduce complexity.

A good way to restrict the model from being too complex is to prepare a list of the parameters which affect the company before the actual modeling. After you create such a list, try to evaluate the ability to create forecasts for the various parameters. The influence of the parameters which you can forecast with a relatively high level of certainty is the most important to model- the amount of complexity will be restricted by the data you have.

The remaining parameters, which you cannot reliably forecast, should also be included in the model. Often, you will want to test the sensitivity of the outcome to changes in hard to predict parameters. This is relevant to both credit analysis and equity valuation. In credit analysis, you’ll want to know the case in which the company will not be able to repay debt.

Consistency and mistakes check

As complexity increases, the chance for modeling mistakes increases as well. Also, as we add more inputs, there is always a chance that several different inputs would be logically influenced by the same parameter, but we will not take that into account then updating the inputs. For example, in order to be consistent, the risk free rate is logically linked to the rate of long term growth. So, when updating the risk free rate, we need to remember that the long term growth assumption should also be reduced. The more inputs, the chance we forget or neglect to notice those connections are increased.

One of the ways to check for modeling mistakes and consistency errors are tracking the financial ratios. As we update the model, we should always track the changes in financial ratios and understand that caused it. For example, if we see that gross profit margins increase by 10% by the 5th year of the model, we must understand what inputs caused this change. One of the most important ratios to track in valuation models is the return on capital, or return on equity. Since those ratios are basically a calculation of other financial ratios, and their “normal” range is widely known, they serve as a good indicator of inconsistencies and mistakes.

Another way to deal with different inputs which are logically dependent on the same parameter is to identify those inputs and link them to it. So, for example, if we have an input of a long term interest rate for the debt of the company, instead of modeling it as a user input, model it as a risk free rate (linked to the risk free rate input) and a debt premium margin, which will be a user input.

Summary

Financial models range from very simple to extremely complex models. The complexity is dependent on the quality and quantity of available data, and time available to build and maintain the model. While making a decision regarding the complexity of the model, we need to take into account that as complexity increases, the model becomes less easy to understand and maintain, but usually more accurate in representing the situation. Also, the level of uncertainty and the ability to forecast the inputs should affect complexity- the more uncertainty, the less complex model is required, since the model is only as good as its inputs.